⌛ This article is now 5 years and 4 months old, a quite long time during which techniques and tools might have evolved. Please contact us to get a fresh insight of our expertise!

Elasticsearch the right way in Symfony

You are building an application with Symfony – good choice 😜 – but now you need some full-text search capabilities? This article is for you.

Multiple options are available:

- going full RDBMS and using FULLTEXT indexes – yes it works;

- using a third party SaaS like Algolia or Elastic App Search;

- going all the way with a dedicated search database like Solr, MeiliSearch, Sonic, or Elasticsearch (be it SaaS or self-hosted).

This last option is today’s preferred choice for multiple reasons:

- complete control over the indexation and search for tailored search;

- very easy to customize;

- no ties with third party;

- hype 👌;

- controlled costs;

- bend the tool to your application’s needs, not the other way around;

- clear and simple licensing;

- great commercial support.

Choosing Elasticsearch today also means embracing a large set of tools and a large community of users, so that’s what we are going to talk about today. This article will not cover search relevance though.

When do I need Elasticsearch

Elasticsearch is an open-source Java full-text search and analytics engine. It works a lot like a NoSQL database exposed over HTTP.

It scales very well, it is fast and you get highly relevant results practically out of the box.

- you don’t need to handle “big-data-like” load to justify it, hundreds of documents are also OK;

- it allows you to search any type of document with domain specific tuning;

- it can compute a lot of statistics about your documents in real time;

- there is a lot of sugar and additional features like highlight, partial search, index management…

That all comes with a cost. Elasticsearch is not that easy to implement or host. The learning curve will trick you into thinking it’s working great, until you realise you have no idea what you are doing.

Don’t use it if:

- you can do your search directly via your RDBMS FULLTEXT indexes;

- you don’t have the time to learn about analyzer, Query DSL, shard, master election… Use a SaaS!

- you don’t have a budget: Elasticsearch is only a tool, not a solution. That means you must invest in architecture, index management, hosting, development, search relevance tuning…

The PHP side

Elasticsearch needs two things:

- HTTP for transport;

- JSON for data.

PHP is well equipped in those areas and your basic calls to Elasticsearch could look like this:

$results = \json_decode(
    file_get_contents('http://localhost:9200/_search'),
    true
);

Time to look for a dedicated client because we cannot ship this to production.

There are a multitude of roads you could take here. You have probably heard of FOSElasticaBundle, but what is it?

FOSElasticaBundle

It’s a bridge between two well known libraries:

- Elastica: used to communicate with Elasticsearch, handle HTTP and queries;

- Doctrine: used to handle database, entities, SQL queries.

One is for Elasticsearch, the other is for RDBMS.

With the Bundle, you can replicate what is stored in your database inside your Elasticsearch cluster, and get Doctrine entity objects (managed!) back when you search.

Version 6 (currently in Beta) adds support for Messenger, Elasticsearch 7 and Symfony 5.

Elastica

As described earlier, Elastica is a library to talk with Elasticsearch. What’s great with it is that it’s fully object oriented! You will never have to write queries as associative arrays.

It’s the main selling point! It’s also very flexible, well maintained and based on the official client. There are some exceptions to this, like the logger or the HTTP client but it’s a work in progress.

Official client

The Elastic company is providing an official client for Elasticsearch. It handles all the API endpoints, the HTTP communications, load balancing… All the low level aspects. Everything is sent via associative arrays, but the documentation is really great.

Elastically

Disclaimer: I’m the author of this library.

Elastically (for Elastica-ally, your best buddy when working with Elastica directly) aims to help developers to quickly implement their own Elastica-based search.

So it’s just an extended Elastica Client with more capacities, and strongly opinionated choices like being DTO-first for the data or forcing aliased / versioned indices on you.

Basically it provides our best practices as a library.

What to choose then?

Every project is different and you may have specific criteria, but here is a basic approach to compare all those libraries:

| Choice | Pros | Cons |
|---|---|---|
| FOSElasticaBundle | No code needed; easy to use; batteries included; strong Symfony integration; opinionated | Release delay; hard to customize / bend to your needs; opinionated |
| Elastica | Awesome objects; active development | Documentation |
| Official Client | Great documentation; active development; always up to date | Associative arrays; low level |
| Elastically | Elastica on steroids; Symfony ready; opinionated | Opinionated; glue code needed |

We found that most of the time, in our projects (we build custom applications for a large diversity of clients) we need full control of the implementation. So using FOSElasticaBundle introduces too much “override” code and we end up rewriting too much of it.

Using the official client directly is also not our recommendation because it makes code reading and writing really hard since everything is an associative array (visual debt).

Using Elastica directly is a good option, as you get both the official client with its deep knowledge of all the API and the awesome object abstraction on top of it.

Finally we use Elastically because Elastica is “from scratch”, but there are some components we always write no matter what:

- an IndexBuilder, capable of creating a new Index with the proper mapping and aliases;

- an Indexer, capable of sending documents to an Index.

Elastically provides that layer on top of Elastica to help implement our own Search, so for the purpose of this article we are going to use Elastically.

Best practices to follow

There is a common set of best practices I think you should follow when building a search engine with Elasticsearch, no matter what framework or language you use.

Use explicit mapping

Always use explicit mapping for your indices (unless you don’t know your field names – that’s the only valid usage of dynamic mapping). By default everything is dynamic and that will lead to disasters.

Let’s see an example. If I index users and the first username is “1969-07-20” (probably a fan of the NASA Moon landing) and the second one is anything else:

PUT space_club/_doc/1
{
  "username": "1969-07-20"
}

PUT space_club/_doc/2
{
  "username": "Damin0u"
}

I will get this exception:

failed to parse field [username] of type [date] in document with id '2'. Preview of field's value: 'Damin0u'

The internal type for username is date, because the first document kinda says so.

At least, with this example, there is an exception: if you index the number 42 and then 42.8, both are going to be stored as long without any warning. You just lost 0.8 of something!

To disable the dynamic mapping you must add this option on your indexes:

mappings:
    dynamic: false
    properties:
        foo: { type: text }

Oh and yes, I recommend writing all mappings and analyzer settings in YAML or similar. JSON is not for humans.

Use aliases

An Elasticsearch Index cannot be renamed, and the mapping of an existing field cannot be changed. So when the time comes to edit the mapping or analyzer you need to recreate the Index.

Deleting and creating the Index is a process during which no search query can happen so… you will have downtime. Unless you use aliases!

Name your indexes with a version number or a date, and put an alias with the name your application knows: that way all searches and indexations are done on the alias.

When a new mapping needs to be done, create a new Index, populate it, and move the alias: boom, no search downtime.
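To make the alias move concrete, here is a minimal sketch of the request body for the Elasticsearch `_aliases` endpoint, which applies all its actions in one atomic call. The helper name `buildAliasSwap` and the index names are hypothetical; this is an illustration of the raw API, not Elastically's internal code.

```php
<?php

declare(strict_types=1);

/**
 * Builds the body of a `POST /_aliases` request that moves an alias
 * from the indices currently holding it to a new index, in one call.
 *
 * @param string[] $oldIndices indices that currently carry the alias
 */
function buildAliasSwap(string $alias, array $oldIndices, string $newIndex): array
{
    $actions = [];
    foreach ($oldIndices as $oldIndex) {
        $actions[] = ['remove' => ['index' => $oldIndex, 'alias' => $alias]];
    }
    $actions[] = ['add' => ['index' => $newIndex, 'alias' => $alias]];

    return ['actions' => $actions];
}

// Hypothetical index names, following the "name + date" convention
$body = buildAliasSwap('beers', ['beers_2020_10_01'], 'beers_2020_11_01');

echo json_encode($body, JSON_PRETTY_PRINT), PHP_EOL;
```

Because the remove and add actions travel in the same request, searches on the alias never hit a moment where it points to nothing.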

This is the default behavior in Elastically.

// Class to build Indexes
$indexBuilder = $client->getIndexBuilder();

// Create the Index in Elasticsearch
// The real Index will be called "beers_2020_11_01"
$index = $indexBuilder->createIndex('beers');

// Add a "beers" alias to this new Index to allow searches
$indexBuilder->markAsLive($index, 'beers');

Use DTO

A Data Transfer Object (DTO) should be used anytime you need to transfer data in and out of a system. At least that’s what I think: deep associative arrays are not easy to work with, but a DTO is typed, easy to share in the code, you always know what the properties are, it’s self-documenting, you can use strong typing on properties…

So instead of indexing:

$product = ['name' => 'Lego Minifig of Geralt of Rivia', 'price' => 3300];

You will create an object:

$product = new Product();
$product->setName('Lego Minifig of Geralt of Rivia');
$product->setPrice(3300);

Then when Elastically indexes this product, it serializes it with the appropriate tools (Symfony ObjectNormalizer by default) to give Elasticsearch the proper JSON representation.

And when Elastically decodes search results, it deserializes the JSON string into a Product object!
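As a rough illustration of that round trip, here is a self-contained sketch using only `json_encode` and `json_decode`. The `Product` class (with public properties instead of setters) and the `toJson` / `fromJson` helpers are hypothetical stand-ins for what the Symfony Serializer does; they are not part of Elastically.

```php
<?php

declare(strict_types=1);

// Hypothetical DTO, mirroring the Product example above
final class Product
{
    public string $name = '';
    public int $price = 0;
}

// Hand-rolled stand-in for serialization (illustration only)
function toJson(Product $product): string
{
    return json_encode(get_object_vars($product), JSON_THROW_ON_ERROR);
}

// Hand-rolled stand-in for deserialization (illustration only)
function fromJson(string $json): Product
{
    $data = json_decode($json, true, 512, JSON_THROW_ON_ERROR);

    $product = new Product();
    $product->name = (string) $data['name'];
    $product->price = (int) $data['price'];

    return $product;
}

$product = new Product();
$product->name = 'Lego Minifig of Geralt of Rivia';
$product->price = 3300;

$json = toJson($product);   // what would be sent as the document source
$decoded = fromJson($json); // what you would get back from a search hit
```

The rest of the code only ever sees typed `Product` objects; the JSON form exists at the boundary with Elasticsearch and nowhere else.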

Use async for real-time updates

For consistency reasons it’s very important to propagate any change to a record in your relational database to your Elasticsearch index.

To do so, you could index the new object right away, in your services or controller for example, but that’s not going to be robust!

There are multiple arguments against synchronous indexing:

- Elasticsearch is asynchronous: you can index a document and it’s not going to be available for search right away anyway! There is a “refresh” process in the background happening every 1 second by default. If you need to force a refresh every time you change an entity, I hope you don’t have a lot of requests per second;

- HTTP is slow: opening an HTTP connection, sending the JSON over it, waiting for the reply – those tasks are going to slow down your application. Elasticsearch indexing response times are good but can fluctuate a lot depending on the load, the network…;

- Loss of update: what if the cluster is down for a minute? Will you roll back the database change for consistency or will you sweep it under the rug and end up with a gap in the search data?

By going asynchronous you prioritize quality of service and resiliency. Having your search page broken for a minute is bad, but not as bad as a 10 second page load when editing an entity.
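The "loss of update" argument can be sketched with a tiny in-memory queue: the request is recorded first, and a worker retries it later if the cluster is unreachable. The `IndexationQueue` class below is a hypothetical stand-in for a real message transport, written only to illustrate the idea.

```php
<?php

declare(strict_types=1);

// Hypothetical in-memory queue, standing in for a real message transport
final class IndexationQueue
{
    /** @var array<int, array{class: string, id: string}> */
    private array $pending = [];

    public function push(string $class, string $id): void
    {
        $this->pending[] = ['class' => $class, 'id' => $id];
    }

    /** Runs $indexer on each pending request; failed requests stay queued. */
    public function consume(callable $indexer): int
    {
        $done = 0;
        $remaining = [];
        foreach ($this->pending as $request) {
            try {
                $indexer($request['class'], $request['id']);
                ++$done;
            } catch (\Throwable $e) {
                $remaining[] = $request; // keep it for the next run
            }
        }
        $this->pending = $remaining;

        return $done;
    }

    public function size(): int
    {
        return count($this->pending);
    }
}

$queue = new IndexationQueue();
$queue->push('App\Model\Post', '42'); // done in the controller, instantly

// First worker run: the cluster is down, nothing is lost
$queue->consume(static function (string $class, string $id): void {
    throw new \RuntimeException('Elasticsearch is unreachable');
});

// Second worker run: the cluster is back, the update goes through
$indexed = [];
$queue->consume(static function (string $class, string $id) use (&$indexed): void {
    $indexed[] = $class . '#' . $id;
});
```

The page that edits the entity only pays for the `push` call; the slow or failing HTTP call happens in the worker.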

Using asynchronous indexing in Symfony is easy thanks to the Messenger component.

Elastically provides a handler and a Message for this:

$bus->dispatch(new IndexationRequest(Product::class, '42'));

Use only Bulk for indexing

By always using the Bulk API for all indexing operations, you only have one method to implement. Otherwise you would have the Document PUT and the Bulk POST in your code, introducing two code paths that could diverge. For example a developer could add a pipeline to the Document PUT and forget to add it to the Bulk.

Just use Bulk. Even for one Document. Less code means fewer bugs.
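For reference, the Bulk API expects newline-delimited JSON: one action line followed by one source line per document, with a final newline. The sketch below builds such a body by hand; `buildBulkBody` and the sample document are hypothetical, and a client library normally does this for you.

```php
<?php

declare(strict_types=1);

/**
 * Builds the newline-delimited JSON body expected by `POST /_bulk`.
 *
 * @param array<string, array> $documents document sources keyed by id
 */
function buildBulkBody(string $index, array $documents): string
{
    $lines = [];
    foreach ($documents as $id => $source) {
        // Action line first, then the document source on the next line
        $lines[] = json_encode(['index' => ['_index' => $index, '_id' => (string) $id]], JSON_THROW_ON_ERROR);
        $lines[] = json_encode($source, JSON_THROW_ON_ERROR);
    }

    // The Bulk API requires a newline after the last line
    return implode("\n", $lines) . "\n";
}

// The same code path works for a single document (hypothetical data)
$one = buildBulkBody('beers', ['42' => ['name' => 'Gulden Draak']]);

echo $one;
```

With one document or one thousand, the body has the same shape, which is why a single code path is enough.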

Use Elasticsearch for the view data

To display your search results page, you have two options:

- get the Document ids from Elastica, query the database and pass entities to the view, as you probably do everywhere else;

- just give the DTO to the view.

Which one is better? 😇

Of course that means you will sometimes need to add properties to the DTO that are only there for display (like an image URL for example), and that’s perfectly fine!

In fact the fields you can search on are only the ones you map. So if there are “view only” properties in your DTO, don’t add them to the mapping and they will not be indexed.

Once you’ve done this for the search result page… why not use this beautiful DTO to display every page of the application?! 😊

Use Symfony HttpClient

Since Symfony 4.3, there is an official HttpClient you can leverage in all your HTTP communications.

What’s nice about it is that you get a profiler panel, clean logs and consistency across your project codebase.

Elastica allows us to use any HTTP Client through a class extending Elastica\Transport\AbstractTransport, so the only thing we have to implement is a transport for the Symfony client.

Elastically provides this transport class.

Add functional tests for search relevance

Relevance is subjective, and search or mapping configuration can alter it a lot. You will implement it wrong in the first place, fix it, break it again, introduce regressions when trying to improve some edge cases… Trust me on this, you need to test relevance on a well known set of documents.

Your database fixtures should be replicated to Elasticsearch and you should have test cases for search. It doesn’t have to be strict, just make sure that when you search for an exact product title, it appears in the top 3 results!
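A "top 3" check like this one does not need anything fancy. Here is a minimal sketch of two helpers you could call from a test; `rankOf`, `isInTop` and the sample results are hypothetical, and the result shape (a list of arrays with a `title` key) is an assumption.

```php
<?php

declare(strict_types=1);

/**
 * Returns the 1-based rank of the first result with the given title,
 * or null when the title is not in the results.
 *
 * @param array<int, array{title: string}> $results
 */
function rankOf(array $results, string $title): ?int
{
    foreach (array_values($results) as $position => $result) {
        if ($result['title'] === $title) {
            return $position + 1;
        }
    }

    return null;
}

/** Tells whether the given title appears in the first $n results. */
function isInTop(array $results, string $title, int $n = 3): bool
{
    $rank = rankOf($results, $title);

    return null !== $rank && $rank <= $n;
}

// Hypothetical search results, in the order returned by the search page
$results = [
    ['title' => 'Pellentesque vitae velit ex'],
    ['title' => 'Lorem ipsum dolor sit amet consectetur adipiscing elit'],
    ['title' => 'Mauris dapibus risus quis suscipit vulputate'],
];

var_dump(isInTop($results, 'Lorem ipsum dolor sit amet consectetur adipiscing elit'));
```

Asserting on a rank threshold instead of an exact position keeps the test stable when you tune boosts, while still catching a search that stops returning the obvious match.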

Let’s start working

First thing is to install the search engine locally, I recommend using Docker of course.

With docker-compose:

services:
    elasticsearch:
        image: docker.elastic.co/elasticsearch/elasticsearch:7.9.3
        environment:
            - cluster.name=docker-cluster
            - bootstrap.memory_lock=true
            - discovery.type=single-node
            - "ES_JAVA_OPTS=-Xms512m -Xmx512m" # 512 MB heap
        ulimits:
            memlock:
                soft: -1
                hard: -1
        ports:
            - 9200:9200

Then you need tools to talk to Elasticsearch, because curl is not a proper development environment!

Tip: if you name your Elasticsearch service “elasticsearch” and expose port 9200, the Symfony binary will automatically detect it and inject the proper environment variables!

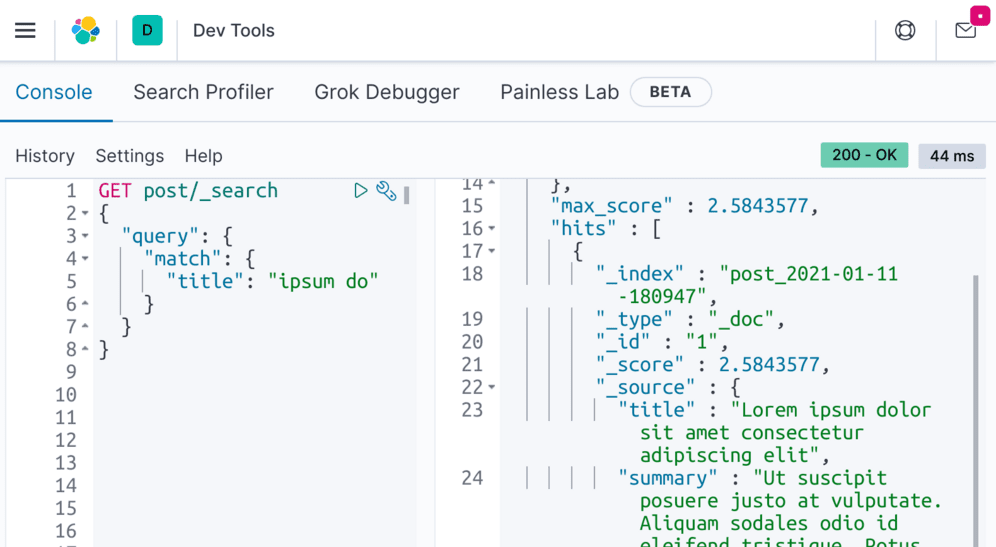

Use Kibana: it’s your PhpStorm for Elasticsearch. Include it in your docker-compose file.

    kibana:
        image: docker.elastic.co/kibana/kibana:7.9.3
        environment:
            ELASTICSEARCH_HOSTS: http://elasticsearch:9200
        depends_on:
            - elasticsearch
        ports:
            - 5601:5601

All you have to know is that you should use the same version number for both.

Then run this command in a terminal:

$ docker-compose up

Head over to http://localhost:5601/ and play with the Dev Tools: create indexes, push documents, run searches. This is your new best friend!

PS: you don’t work with Docker? No worries, Elasticsearch is distributed for all systems via official packages, Windows installer, recipes for orchestrators, plain zip archive…

Putting it all together

We are going to implement Elasticsearch on top of the Symfony Demo as a “real world” application.

This application is a simple blog with a backend. We have user accounts, posts and comments stored in a relational database. Being able to search for those means duplicating that data in a search engine, namely Elasticsearch.

Let’s start with our dependencies:

$ composer require symfony/messenger symfony/http-client jolicode/elastically

The DTO

Then we are going to create a Post DTO that will hold everything we want to search for:

- the post title of course;

- the post content;

- the post comments;

- the author names…

We are going to denormalize the related entities into one unique object:

namespace App\Model;

class Post
{
    public $title;
    public $summary;
    public $authorName;
    public $slug;

    /**
     * @var array<PostComment>
     */
    public $comments = [];

    /**
     * @var \DateTime|null
     */
    public $publishedAt;
}

And the PostComment children:

namespace App\Model;

class PostComment
{
    public $content;
    public $authorName;
}

We will need some way to build this Post model from a Post entity. To do that you could use an Automapper like this one.

But for this example we are going to add a method on the entity:

public function toModel(): \App\Model\Post
{
    $model = new \App\Model\Post();
    $model->title = $this->title;
    $model->authorName = $this->author->getFullName();
    $model->publishedAt = $this->publishedAt;
    $model->slug = $this->slug;
    $model->summary = $this->summary;

    // Denormalize each comment into the Post model
    foreach ($this->comments as $comment) {
        // Fully qualified name: the entity lives in another namespace
        $postComment = new \App\Model\PostComment();
        $postComment->content = $comment->getContent();
        $postComment->authorName = $comment->getAuthor()->getFullName();
        $model->comments[] = $postComment;
    }

    return $model;
}

Elastically setup

Then we need to set up Elastically, build the mapping, create the index and send the documents to it.

Let’s add an elastically.yaml file:

services:
    _defaults:
        autowire: true
        autoconfigure: true

    JoliCode\Elastically\Transport\HttpClientTransport: ~
    JoliCode\Elastically\Messenger\IndexationRequestHandler: ~

    JoliCode\Elastically\Client:
        arguments:
            $config:
                host: '%env(ELASTICSEARCH_HOST)%'
                port: '%env(ELASTICSEARCH_PORT)%'
                transport: '@JoliCode\Elastically\Transport\HttpClientTransport'
                elastically_mappings_directory: '%kernel.project_dir%/config/elasticsearch'
                elastically_index_class_mapping:
                    post: App\Model\Post
                elastically_serializer: '@serializer'
                elastically_bulk_size: 100
            $logger: '@logger'

    JoliCode\Elastically\Messenger\DocumentExchangerInterface:
        alias: App\Elasticsearch\DocumentExchanger

framework:
    messenger:
        transports:
            async: "%env(MESSENGER_TRANSPORT_DSN)%"

        routing:
            # async is whatever name you gave your transport above
            'JoliCode\Elastically\Messenger\IndexationRequest': async

This configuration references the %kernel.project_dir%/config/elasticsearch directory for mapping storage:

# config/elasticsearch/post_mapping.yaml
settings:
    number_of_replicas: 0
    number_of_shards: 1
    refresh_interval: 60s
mappings:
    dynamic: false
    properties:
        title:
            type: text
            analyzer: english
            fields:
                autocomplete:
                    type: text
                    analyzer: app_autocomplete
                    search_analyzer: standard
        comments:
            type: object
            properties:
                content:
                    type: text
                    analyzer: english
                authorName:
                    type: text
                    analyzer: english

We also need a custom analyzer for partial matching, so we add it:

# config/elasticsearch/analyzers.yaml
filter:
    app_autocomplete_filter:
        type: 'edge_ngram'
        min_gram: 1
        max_gram: 20
analyzer:
    app_autocomplete:
        type: 'custom'
        tokenizer: 'standard'
        filter: [ 'lowercase', 'asciifolding', 'elision', 'app_autocomplete_filter' ]

At this point, we can work with the JoliCode\Elastically\Client service!
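To get a feel for what the edge_ngram filter configured above produces, here is a small emulation in plain PHP. It is only a sketch: the `edgeNgrams` function is hypothetical, it works on one already lowercased token, and it ignores the other filters of the analyzer.

```php
<?php

declare(strict_types=1);

/**
 * Emulates an edge_ngram token filter on a single token: returns every
 * prefix whose length is between $minGram and $maxGram characters.
 *
 * @return string[]
 */
function edgeNgrams(string $token, int $minGram = 1, int $maxGram = 20): array
{
    // Split on UTF-8 characters, not bytes
    $chars = preg_split('//u', $token, -1, PREG_SPLIT_NO_EMPTY) ?: [];

    $grams = [];
    for ($size = $minGram; $size <= min($maxGram, count($chars)); ++$size) {
        $grams[] = implode('', array_slice($chars, 0, $size));
    }

    return $grams;
}

print_r(edgeNgrams('lorem'));
// Produces the prefixes: l, lo, lor, lore, lorem
```

Those prefixes are what end up in the `title.autocomplete` field at index time. The search side keeps the `standard` analyzer (see `search_analyzer` in the mapping), so a query like "lor" is compared as is against the stored prefixes instead of being cut into n-grams itself.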

The Create Index command

To create an index and populate it with the data, I usually build a single purpose command. This command is responsible for:

- creating a new index with my mapping;

- sending all the data inside;

- moving aliases;

- deleting old indices.

But with Elastically, this procedure is entirely up to you so you can tweak and change things based on your project needs.

Here is the command code:

namespace App\Elasticsearch\Command;

use App\Repository\PostRepository;
use Elastica\Document;
use JoliCode\Elastically\Client;
use Symfony\Component\Console\Command\Command;
use Symfony\Component\Console\Input\InputInterface;
use Symfony\Component\Console\Output\OutputInterface;

class CreateIndexCommand extends Command
{
    protected static $defaultName = 'app:elasticsearch:create-index';

    private $client;
    private $postRepository;

    protected function configure()
    {
        $this
            ->setDescription('Build new index from scratch and populate.')
        ;
    }

    // The command name comes from $defaultName, so no optional $name
    // argument is needed in front of the required services
    public function __construct(Client $client, PostRepository $postRepository)
    {
        parent::__construct();
        $this->client = $client;
        $this->postRepository = $postRepository;
    }

    protected function execute(InputInterface $input, OutputInterface $output): int
    {
        // Create a new index from the "post" mapping
        $indexBuilder = $this->client->getIndexBuilder();
        $newIndex = $indexBuilder->createIndex('post');
        $indexer = $this->client->getIndexer();

        // Iterate over all posts and schedule them for bulk indexing
        $allPosts = $this->postRepository->createQueryBuilder('post')->getQuery()->iterate();
        foreach ($allPosts as $post) {
            $post = $post[0];
            $indexer->scheduleIndex($newIndex, new Document($post->getId(), $post->toModel()));
        }

        // Send what is left in the bulk queue
        $indexer->flush();

        // Move the alias, then clean up
        $indexBuilder->markAsLive($newIndex, 'post');
        $indexBuilder->speedUpRefresh($newIndex);
        $indexBuilder->purgeOldIndices('post');

        return Command::SUCCESS;
    }
}

You can run this command:

$ ./bin/console app:elasticsearch:create-index

# or with the Symfony binary
# $ symfony console app:elasticsearch:create-index

That’s all there is to it, our data is properly indexed.

The search results

Inside this demo, the search results are displayed by the \App\Controller\BlogController::search action.

By using JoliCode\Elastically\Client, we can use the Elastica search object graph and get back our Post DTO properly hydrated.

- $foundPosts = $posts->findBySearchQuery($query, $limit);

+ $searchQuery = new MultiMatch();

+ $searchQuery->setFields([

+ 'title^5',

+ 'title.autocomplete',

+ 'comments.content',

+ 'comments.authorName',

+ ]);

+ $searchQuery->setQuery($query);

+ $searchQuery->setType(MultiMatch::TYPE_MOST_FIELDS);

+ $foundPosts = $client->getIndex('post')->search($searchQuery);

$results = [];

- foreach ($foundPosts as $post) {

+

+ foreach ($foundPosts->getResults() as $result) {

+ /** @var \App\Model\Post $post */

+ $post = $result->getModel();

+

$results[] = [

- 'title' => htmlspecialchars($post->getTitle(), ENT_COMPAT | ENT_HTML5),

- 'date' => $post->getPublishedAt()->format('M d, Y'),

- 'author' => htmlspecialchars($post->getAuthor()->getFullName(), ENT_COMPAT | ENT_HTML5),

- 'summary' => htmlspecialchars($post->getSummary(), ENT_COMPAT | ENT_HTML5),

- 'url' => $this->generateUrl('blog_post', ['slug' => $post->getSlug()]),

+ 'title' => htmlspecialchars($post->title, ENT_COMPAT | ENT_HTML5),

+ 'date' => $post->publishedAt->format('M d, Y'),

+ 'author' => htmlspecialchars($post->authorName, ENT_COMPAT | ENT_HTML5),

+ 'summary' => htmlspecialchars($post->summary, ENT_COMPAT | ENT_HTML5),

+ 'url' => $this->generateUrl('blog_post', ['slug' => $post->slug]),

];

}

Here I’m creating the MultiMatch query directly in my controller for simplicity, but adopting a repository-like structure is better.
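If you are more used to reading raw Query DSL, the object graph above corresponds roughly to the following request body, written here as a plain PHP array. This is a sketch for reading purposes: `buildMultiMatch` is a hypothetical helper, and the exact JSON emitted by Elastica may differ in key order or defaults.

```php
<?php

declare(strict_types=1);

/**
 * Builds the raw Query DSL for a multi_match search as a PHP array.
 *
 * @param string[] $fields field names, optionally boosted ("title^5")
 */
function buildMultiMatch(string $text, array $fields): array
{
    return [
        'query' => [
            'multi_match' => [
                'query' => $text,
                'fields' => $fields,
                // Scores from all matching fields are combined
                'type' => 'most_fields',
            ],
        ],
    ];
}

$body = buildMultiMatch('lorem', [
    'title^5',
    'title.autocomplete',
    'comments.content',
    'comments.authorName',
]);

echo json_encode($body, JSON_PRETTY_PRINT), PHP_EOL;
```

Pasting the printed JSON into the Kibana Dev Tools, under a `GET post/_search` line, is a quick way to experiment with boosts before changing the PHP code.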

Real-time updates

There are a couple of places where the Post can be edited so we need to implement an update process for our search data.

The right way is to leverage Symfony Messenger to send the updates to a queue that will be processed in the background.

Our configuration already declares a Message named JoliCode\Elastically\Messenger\IndexationRequest, this is a built-in message in Elastically coming with its own Handler: \JoliCode\Elastically\Messenger\IndexationRequestHandler.

For this piece of code to work, we just need a \JoliCode\Elastically\Messenger\DocumentExchangerInterface implementation. The goal of this service will be to get an Elastica Document instance in exchange for a class and an ID (what the IndexationRequest is basically made of).

For our Post coming from Doctrine, we implement this:

namespace App\Elasticsearch;

use App\Model\Post;
use App\Repository\PostRepository;
use Elastica\Document;
use JoliCode\Elastically\Messenger\DocumentExchangerInterface;

class DocumentExchanger implements DocumentExchangerInterface
{
    private $postRepository;

    public function __construct(PostRepository $postRepository)
    {
        $this->postRepository = $postRepository;
    }

    public function fetchDocument(string $className, string $id): ?Document
    {
        if ($className === Post::class) {
            $post = $this->postRepository->find($id);

            if ($post) {
                return new Document($id, $post->toModel());
            }
        }

        return null;
    }
}

And this is it, now we can add the Messenger dispatch code everywhere we need:

$bus->dispatch(new IndexationRequest(\App\Model\Post::class, $post->getId()));

// for DELETE
// $bus->dispatch(new IndexationRequest(\App\Model\Post::class, $id, IndexationRequestHandler::OP_DELETE));

In:

- \App\Controller\Admin\BlogController::new;

- \App\Controller\Admin\BlogController::edit;

- \App\Controller\Admin\BlogController::delete.

But wait! There are other places where we need to update the document in Elasticsearch. As we denormalized Comments and Authors inside the Post, every update to these entities must also trigger updates to the appropriate Post!

That’s the downside of denormalization.

We add the dispatch call to \App\Controller\BlogController::commentNew for the comment. For the Authors it’s a bit more complicated:

// \App\Controller\UserController::edit
// Reindex ALL posts where this user wrote the post or a comment
// TODO: move this to an event
$postIds = $postRepository->findPostIds($user);

$operations = [];
foreach ($postIds as $postId) {
    $operations[] = new IndexationRequest(Post::class, $postId);
}

$bus->dispatch(new MultipleIndexationRequest($operations));

This time we leverage the \JoliCode\Elastically\Messenger\MultipleIndexationRequest Message to group all the updates into a single message.

Adding functional tests

Our search query and our mapping are quite simple but that’s no excuse to ignore search relevance tests.

The Symfony Demo already tests the search action, so a good first baby step could be to add search query and results checks based on the fixtures:

public function testAjaxSearch(): void
{
    $client = static::createClient();

    // A single word from a fixture title
    $client->xmlHttpRequest('GET', '/en/blog/search', ['q' => 'lorem']);
    $results = json_decode($client->getResponse()->getContent(), true);

    $this->assertResponseHeaderSame('Content-Type', 'application/json');
    $this->assertSame('Lorem ipsum dolor sit amet consectetur adipiscing elit', $results[0]['title']);
    $this->assertSame('Jane Doe', $results[0]['author']);

    // The first words of another fixture title
    $client->xmlHttpRequest('GET', '/en/blog/search', ['q' => 'Nulla porta lobortis']);
    $results = json_decode($client->getResponse()->getContent(), true);

    $this->assertResponseHeaderSame('Content-Type', 'application/json');
    $this->assertSame('Nulla porta lobortis ligula vel egestas', $results[0]['title']);
}

Here for various search strings, we make sure a specific Post is first in the results. That way, if someone breaks the search, we will know.

Every search tuning example and any requirement from the product owner should also be added here.

Final words

This blog post and the conferences I’ve given on the subject (here, here, here and here) are the results of years of experience as a PHP developer building search engines with Elasticsearch, working on a wide variety of projects with different teams, skills and workloads. You may not do things this way, but that’s no reason to feel wrong: there is more than one good solution and no perfect one. This is my best one at the moment and I hope you like it.

The Symfony Demo “Elasticsearch Edition” is visible here (branch elastically).
