WebGL, the wow effect

Today, our goal is to develop immersive apps and experiments 🏆

Storytelling, UX, UI, animations and, above all, interactions are the main ingredients. Web APIs and JavaScript are very useful for building them: they give access to gesture events from a phone or to cameras, and let us control transitions between pages without any plugin. Interactions and animated UIs demand good display and computing capabilities from devices.

Today, we’ll have a look at a technology that powers the most complex graphics rendering: WebGL.

What is WebGL?

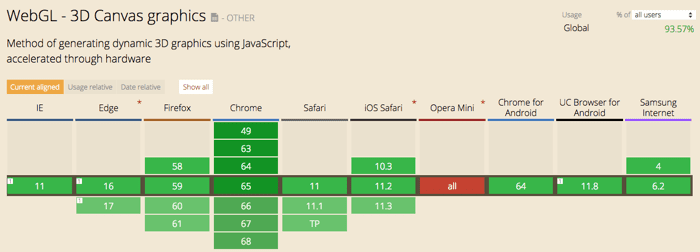

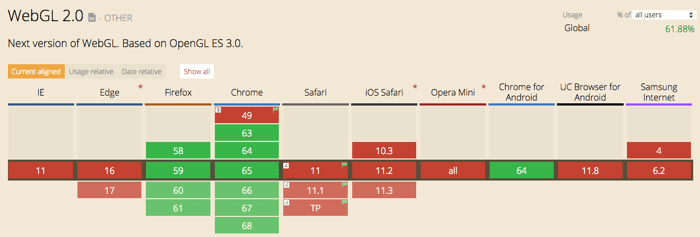

At first sight, WebGL is a JavaScript API for rendering interactive 3D graphics. Created by the Khronos Group, it is based on the OpenGL ES standard and runs in an HTML5 <canvas> element. It is almost fully supported by modern browsers.
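Since everything starts from a canvas, here is a minimal sketch of how a page could ask for a WebGL 1 context. The helper name `getWebGLContext` is an assumption made for this illustration; `getContext('webgl')` and the older `'experimental-webgl'` identifier are the standard context names.

```javascript
// Hypothetical helper: returns a WebGL 1 context for the given canvas,
// or null when the browser or device does not support WebGL.
function getWebGLContext(canvas) {
  // 'experimental-webgl' is the prefixed name used by some older browsers.
  var names = ['webgl', 'experimental-webgl'];
  for (var i = 0; i < names.length; i++) {
    var context = canvas.getContext(names[i]);
    if (context) {
      return context;
    }
  }
  return null;
}

// Browser-only usage, skipped where there is no DOM.
if (typeof document !== 'undefined') {
  var demoCanvas = document.createElement('canvas');
  var demoGl = getWebGLContext(demoCanvas);
  if (demoGl) {
    demoGl.clearColor(0.0, 0.0, 0.0, 1.0); // opaque black
    demoGl.clear(demoGl.COLOR_BUFFER_BIT);
  } else {
    console.warn('WebGL is not available on this device.');
  }
}
```

Returning `null` instead of throwing lets the page fall back to a static image or a simpler 2D version when WebGL is missing.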

[flashback 💡] Do you remember Flash 3D rotation tools and Flash 3D websites? Well… it is similar, but without the proprietary ownership or the need for browser plugins, and with many more possibilities!

If you look closer, WebGL is not just about 3D graphics. This Web API allows us to communicate directly with the GPU (Graphics Processing Unit), and its shaders are written in a dedicated language: GLSL. WebGL drives the rasterization of an image, that is, the translation of vector data into raster data (pixels that can be displayed on a screen).

WebGL projects work on desktop and mobile. The limits are set by the device’s computing capabilities: if a project is not well optimized (during design or development), it can be heavy in terms of performance. WebGL works with image data, lights, shadows and post-processing, which represents a lot of calculations for the device. Everything needs to be fast and smooth for the user, and that’s not the easiest part. Since we render a 3D scene on a 2D screen, not everything has to be physically realistic: there are visual tricks to improve performance.

3D on the Web has always been an immersive way to present a product or a service. Nowadays, with augmented and virtual reality, 3D is more and more present on the Web and on mobile.

A second version of the technology (WebGL 2.0) has been developed. It is less supported than the first one for now, but things are moving fast!

The following examples focus on version 1, as the basics stayed the same. Here is an article to see the evolutions between the two versions.

What can I create with it?

WebGL can be applied, for example, to interactive music videos, games, data visualization, art, 3D design environments, 3D modeling of space, 3D modeling of objects, plotting mathematical functions, or creating physical simulations.

Currently, one of the main applications is marketing support for a service, a movie or products (mainly in the luxury field). A « WebGL experiment » is a great asset in a digital campaign: it helps tell a story and allows the user to interact with it. Museums and the cultural sector took an early interest in this area and built a lot of digital installations and apps around it.

The technology is young, which leaves room for originality. Often paired with a touch of gamification, WebGL helps 3D on the Web rise. The technology is often seen as experimental and in need of really fast devices, but make no mistake: you can get some pretty badass results. Good workflows and libraries are becoming strong and reliable.

Here are some examples of WebGL projects. It can be a full 3D concept or just tiny animations. In every case, we can see the immersive effect.

Full WebGL games

Data visualization

WebGL backgrounds and details

Home page and transitions

Those examples are just a glimpse of what can be done. I found most of them in the WebGL Awwwards list, but there are other sources (Chrome Experiments, three.js, webdesigntrends).

WebGL involves everyone, from the thinking process to the production. It’s a team effort (as always, isn’t it?). It needs testing, communication and prototyping. Creative developers are often linked to this kind of project.

How does it work?

WebGL development is driven by a community that loves to share. Developers like to prototype, share and help each other. There are a lot of tutorials and lessons available (mostly about « how to code for WebGL » and not so much about « 3D design for WebGL »). Beyond code, developers also share articles about their thinking process and their experiments. This helps the technology grow in popularity and helps good practices emerge.

For this article’s prototype, I used a high-level JavaScript library, three.js. There are others, like babylon.js or claygl. I modeled and animated the scene in Blender and used the Khronos exporter to go from Blender to glTF.

It represents the 3D version of JoliCode website's homepage. Here is the 🎉 github page 🎉 and source code.

Three.js has an active community with many users and contributors. Here is the basic code (in JavaScript) to create a scene with a rotating cube:

```javascript
var camera, scene, renderer;
var geometry, material, mesh;

init();
animate();

function init() {
  camera = new THREE.PerspectiveCamera( 70, window.innerWidth / window.innerHeight, 0.01, 10 );
  camera.position.z = 1;

  scene = new THREE.Scene();

  geometry = new THREE.BoxGeometry( 0.2, 0.2, 0.2 );
  material = new THREE.MeshNormalMaterial();

  mesh = new THREE.Mesh( geometry, material );
  scene.add( mesh );

  renderer = new THREE.WebGLRenderer( { antialias: true } );
  renderer.setSize( window.innerWidth, window.innerHeight );
  document.body.appendChild( renderer.domElement );
}

function animate() {
  requestAnimationFrame( animate );

  mesh.rotation.x += 0.01;
  mesh.rotation.y += 0.02;

  renderer.render( scene, camera );
}
```

[technical alert 🔧] We’ll try to explore how WebGL works in the simplest way 🤓.

WebGL uses a low-level language, and coding can be quite verbose. As we saw, there are libraries that help the development process. Nevertheless, it’s always good to know how it works behind the scenes.

Everything is based on a series of steps: the rendering pipeline. Two languages are used:

- OpenGL Shading Language (GLSL) to communicate with the GPU. « GLSL syntax is based on the C programming language. It was created by the OpenGL ARB (OpenGL Architecture Review Board) to give developers more direct control of the graphics pipeline without having to use ARB assembly language or hardware-specific languages. » Source.

- JavaScript to control the context, data arrays, buffer objects (vertex and index), compiled shaders, attributes, uniforms (global variables) and matrices.

GLSL is used to create shader programs. They are composed of two parts: the vertex shader and the fragment shader. They are called every time something needs to be drawn, and they are executed in parallel by the GPU 🏃, which allows better performance.
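To make the split between the two languages concrete, here is a minimal sketch of how JavaScript could hand GLSL source to the GPU with the raw WebGL API. The helper names `compileShader` and `createProgram` are assumptions made for this illustration (three.js does this work for you); the `gl.*` calls are standard WebGL 1 methods.

```javascript
// Hypothetical helper: compiles one shader stage from GLSL source.
// `type` is gl.VERTEX_SHADER or gl.FRAGMENT_SHADER.
function compileShader(gl, type, source) {
  var shader = gl.createShader(type);
  gl.shaderSource(shader, source);
  gl.compileShader(shader);
  if (!gl.getShaderParameter(shader, gl.COMPILE_STATUS)) {
    var shaderLog = gl.getShaderInfoLog(shader);
    gl.deleteShader(shader);
    throw new Error('Shader compilation failed: ' + shaderLog);
  }
  return shader;
}

// Hypothetical helper: links both stages into one program the GPU can run.
function createProgram(gl, vertexSource, fragmentSource) {
  var program = gl.createProgram();
  gl.attachShader(program, compileShader(gl, gl.VERTEX_SHADER, vertexSource));
  gl.attachShader(program, compileShader(gl, gl.FRAGMENT_SHADER, fragmentSource));
  gl.linkProgram(program);
  if (!gl.getProgramParameter(program, gl.LINK_STATUS)) {
    var programLog = gl.getProgramInfoLog(program);
    gl.deleteProgram(program);
    throw new Error('Program linking failed: ' + programLog);
  }
  return program;
}
```

In a browser, the returned program would then be activated with `gl.useProgram(program)` before drawing.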

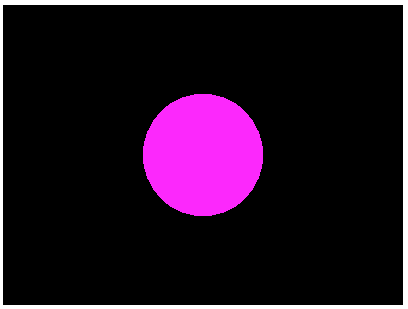

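Here is the shader version of a « Hello World »:

Vertex shader:

```glsl
// projectionMatrix, modelViewMatrix and position are declared for you
// by three.js when the shader is used through a ShaderMaterial.
void main() {
  gl_Position = projectionMatrix *
                modelViewMatrix *
                vec4(position, 1.0);
}
```

Fragment shader:

```glsl
#ifdef GL_ES
precision mediump float;
#endif

void main() {
  gl_FragColor = vec4(1.0,  // R
                      0.0,  // G
                      1.0,  // B
                      1.0); // A
}
```

Result applied to a sphere: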

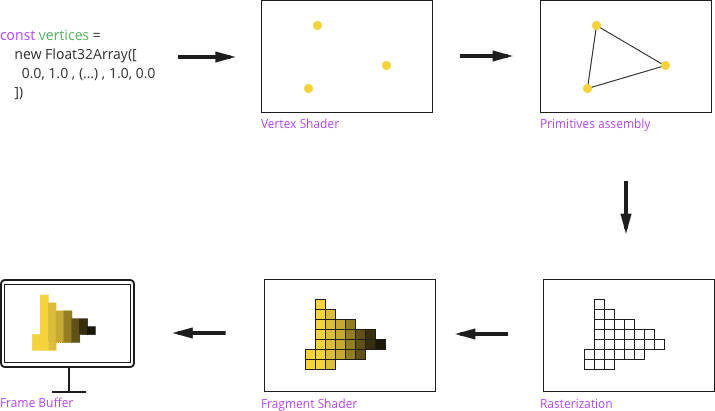
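As a rough sketch of how such a result could be obtained with three.js, the two shaders can be passed as strings to a `ShaderMaterial`. The helper name `createShadedSphere`, the sphere size and the segment counts are assumptions made for this illustration; `THREE` is passed in as a parameter so the sketch does not assume how the library is loaded.

```javascript
// GLSL sources of the « Hello World » shaders, stored as JavaScript strings.
// ShaderMaterial declares projectionMatrix, modelViewMatrix and position,
// and adds a default float precision, so the precision block is left out.
var vertexShader = [
  'void main() {',
  '  gl_Position = projectionMatrix * modelViewMatrix * vec4(position, 1.0);',
  '}'
].join('\n');

var fragmentShader = [
  'void main() {',
  '  gl_FragColor = vec4(1.0, 0.0, 1.0, 1.0);', // magenta, fully opaque
  '}'
].join('\n');

// Hypothetical helper: builds a sphere mesh drawn with the two shaders.
function createShadedSphere(THREE) {
  var geometry = new THREE.SphereGeometry(0.5, 32, 32);
  var material = new THREE.ShaderMaterial({
    vertexShader: vertexShader,
    fragmentShader: fragmentShader
  });
  return new THREE.Mesh(geometry, material);
}

// Usage (browser, with three.js loaded): scene.add(createShadedSphere(THREE));
```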

The rendering pipeline:

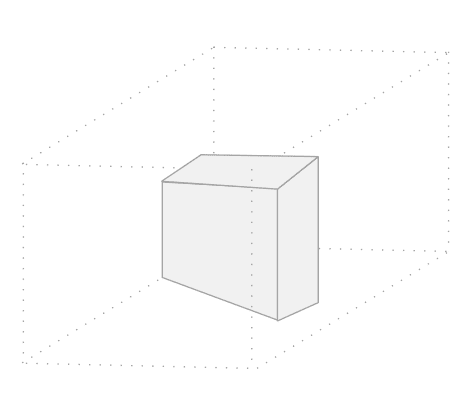

- Step 1 (programmable) – Create the data representing the geometry (JavaScript) and pass it to the shaders (GLSL);

- Step 2 (programmable) – The data is fed to the vertex shader and the rendering process starts: each vertex position is calculated and stored along with its color and texture coordinates;

- Step 3 – Primitives are assembled (WebGL primitives are points, lines and triangles). This step creates vector shapes for the geometry;

- Step 4 – Rasterization, where primitives are mapped to pixels. Primitives that are not visible or that fall outside the view area are discarded. Vertex attributes are interpolated across the pixels each primitive covers;

- Step 5 (programmable) – The fragment shader takes input from the vertex shader and the rasterization stage and calculates the color of each pixel. Other calculations can be done after determining the color;

- Final step – The image is displayed on the 2D screen, and the frame buffer (part of the graphics memory) holds the scene data.
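Steps 3 to 5 run on the GPU, but the idea can be imitated on the CPU. The sketch below is only an illustration, not how WebGL is implemented: it takes one triangle, decides which pixels it covers, and interpolates a per-vertex value across them. The function names and the `{ x, y, value }` vertex shape are assumptions made for this example.

```javascript
// Signed area of the parallelogram (a, b, p); its sign tells on which side
// of the edge a -> b the point p lies.
function edge(ax, ay, bx, by, px, py) {
  return (bx - ax) * (py - ay) - (by - ay) * (px - ax);
}

// Hypothetical CPU rasterizer: returns height rows of width cells holding the
// value interpolated from the three vertices, or null where the triangle does
// not cover the pixel. Vertices are { x, y, value } in pixel coordinates.
function rasterizeTriangle(v0, v1, v2, width, height) {
  var area = edge(v0.x, v0.y, v1.x, v1.y, v2.x, v2.y);
  var rows = [];
  for (var y = 0; y < height; y++) {
    var row = [];
    for (var x = 0; x < width; x++) {
      var value = null;
      if (area !== 0) {
        // Sample at the pixel centre.
        var px = x + 0.5;
        var py = y + 0.5;
        // Barycentric weights of the sample relative to each vertex.
        var w0 = edge(v1.x, v1.y, v2.x, v2.y, px, py) / area;
        var w1 = edge(v2.x, v2.y, v0.x, v0.y, px, py) / area;
        var w2 = edge(v0.x, v0.y, v1.x, v1.y, px, py) / area;
        if (w0 >= 0 && w1 >= 0 && w2 >= 0) {
          // Same idea as a varying: blend the per-vertex values.
          value = w0 * v0.value + w1 * v1.value + w2 * v2.value;
        }
      }
      row.push(value);
    }
    rows.push(row);
  }
  return rows;
}

// Usage: a right triangle on a 4 x 4 grid, with a value of 1 at one corner.
var coverage = rasterizeTriangle(
  { x: 0, y: 0, value: 0 },
  { x: 4, y: 0, value: 1 },
  { x: 0, y: 4, value: 0 },
  4, 4
);
```

Pixels outside the triangle stay `null` (they are discarded), while covered pixels receive a blended value that a fragment shader would turn into a color.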

Extract from WebGL fundamentals:

There are 4 ways a shader can receive data:

- Attributes and Buffers: Buffers are arrays of binary data you upload to the GPU. Usually buffers contain things like positions, normals, texture coordinates, vertex colors. Attributes are used to specify how to pull data out of your buffers and provide them to your vertex shader.

- Uniforms: Uniforms are effectively global variables you set before you execute your shader program.

- Textures: Textures are arrays of data you can randomly access in your shader program. The most common thing to put in a texture is image data but textures are just data and can just as easily contain something other than colors.

- Varyings: Varyings are a way for a vertex shader to pass data to a fragment shader. Depending on what is being rendered, points, lines, or triangles, the values set on a varying by a vertex shader will be interpolated while executing the fragment shader.
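Here is a minimal sketch of the first two mechanisms with the raw WebGL API. It assumes an already linked program whose shaders declare `attribute vec2 a_position;` and `uniform vec4 u_color;`; those two names, like the helper name `setupTriangle`, are chosen for this illustration only.

```javascript
// Hypothetical helper: uploads one triangle and wires it to a shader program.
function setupTriangle(gl, program) {
  // Buffer: raw vertex data stored on the GPU (x, y per vertex, clip space).
  var positions = new Float32Array([0.0, 0.5, -0.5, -0.5, 0.5, -0.5]);
  var buffer = gl.createBuffer();
  gl.bindBuffer(gl.ARRAY_BUFFER, buffer);
  gl.bufferData(gl.ARRAY_BUFFER, positions, gl.STATIC_DRAW);

  // Attribute: how to read the buffer, here 2 floats per vertex.
  var positionLocation = gl.getAttribLocation(program, 'a_position');
  gl.enableVertexAttribArray(positionLocation);
  gl.vertexAttribPointer(positionLocation, 2, gl.FLOAT, false, 0, 0);

  // Uniform: one value shared by every vertex and fragment of the draw call.
  gl.useProgram(program);
  var colorLocation = gl.getUniformLocation(program, 'u_color');
  gl.uniform4f(colorLocation, 1.0, 0.0, 1.0, 1.0);

  // Number of vertices to draw.
  return positions.length / 2;
}

// Usage (browser): var count = setupTriangle(gl, program);
//                  gl.drawArrays(gl.TRIANGLES, 0, count);
```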

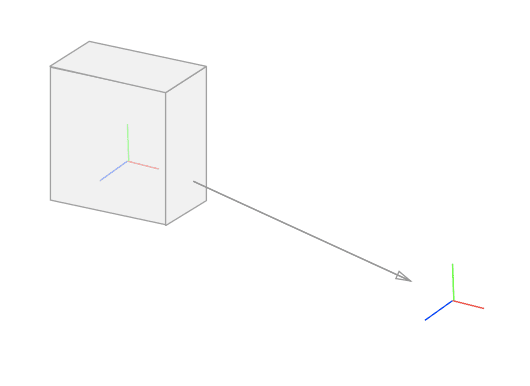

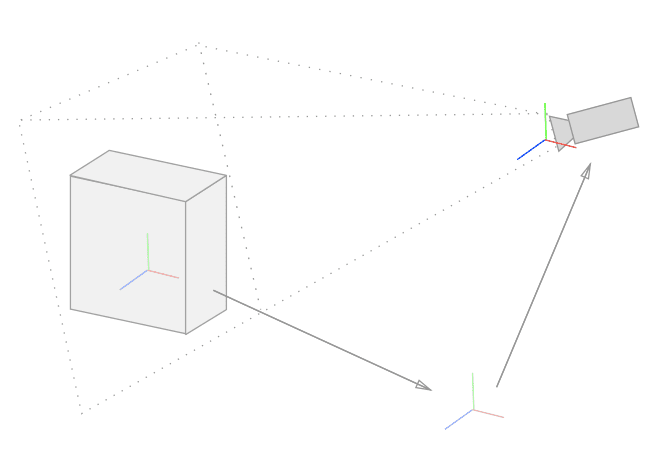

At this point, we may wonder: where is the 3D in all of this? Well, it’s not magic: we need to calculate it. As we saw, WebGL is a rasterization tool. We go from coordinates defined in a space to a result on a screen. Our data must be brought into the WebGL space, also called the clip space coordinate system.

This space is 2 units wide. We can visualize it as a cube going from –1 to 1 in three dimensions with a center of (0,0,0). It does not take any other factor into account, such as the screen ratio. The vertex shader returns the calculated position in this space through a variable called gl_Position. To do so, positions are multiplied by several matrices:

- Model matrix: transforms the object’s local coordinates into the WebGL 3D space (at first, the origin of the vertices is the object’s center; after this calculation, it is the origin of the 3D WebGL world);

- View matrix: moves the origin of the vertex coordinates to a point that will be treated as the camera position;

- Projection matrix: introduces the notion of perspective. The farther away objects are, the smaller they appear.
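The three matrices above can be sketched in plain JavaScript to see the effect of perspective on a single point. This is a simplified illustration: the function names are assumptions, only translations are used for the model and view matrices, and the projection follows the usual OpenGL-style perspective formula.

```javascript
// 4x4 matrices are stored column-major, the layout WebGL expects:
// the element at row r, column c lives at index c * 4 + r.
function multiply(a, b) {
  var out = new Array(16);
  for (var c = 0; c < 4; c++) {
    for (var r = 0; r < 4; r++) {
      var sum = 0;
      for (var k = 0; k < 4; k++) {
        sum += a[k * 4 + r] * b[c * 4 + k];
      }
      out[c * 4 + r] = sum;
    }
  }
  return out;
}

function translation(tx, ty, tz) {
  return [1, 0, 0, 0,  0, 1, 0, 0,  0, 0, 1, 0,  tx, ty, tz, 1];
}

// Perspective projection; fovY is the vertical field of view in radians.
function perspective(fovY, aspect, near, far) {
  var f = 1 / Math.tan(fovY / 2);
  return [
    f / aspect, 0, 0, 0,
    0, f, 0, 0,
    0, 0, (far + near) / (near - far), -1,
    0, 0, (2 * far * near) / (near - far), 0
  ];
}

// What the vertex shader computes for gl_Position: projection * view * model * position.
function toClipSpace(projection, view, model, position) {
  var mvp = multiply(projection, multiply(view, model));
  var v = [position[0], position[1], position[2], 1];
  var out = [0, 0, 0, 0];
  for (var r = 0; r < 4; r++) {
    for (var c = 0; c < 4; c++) {
      out[r] += mvp[c * 4 + r] * v[c];
    }
  }
  return out;
}

// The GPU then divides by w to land in the -1..1 cube.
function toNdc(clip) {
  return [clip[0] / clip[3], clip[1] / clip[3], clip[2] / clip[3]];
}

// Usage: the camera sits at z = 1, so the view matrix moves the world by -1.
var projection = perspective(Math.PI / 2, 1, 0.1, 10);
var view = translation(0, 0, -1);
var nearPoint = toNdc(toClipSpace(projection, view, translation(0, 0, 0), [0.1, 0, 0]));
var farPoint = toNdc(toClipSpace(projection, view, translation(0, 0, -1), [0.1, 0, 0]));
// farPoint[0] is half of nearPoint[0]: the same point, twice as far from the
// camera, ends up twice as close to the center of the screen.
```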

To get further into the code, there are plenty of tutorials such as:

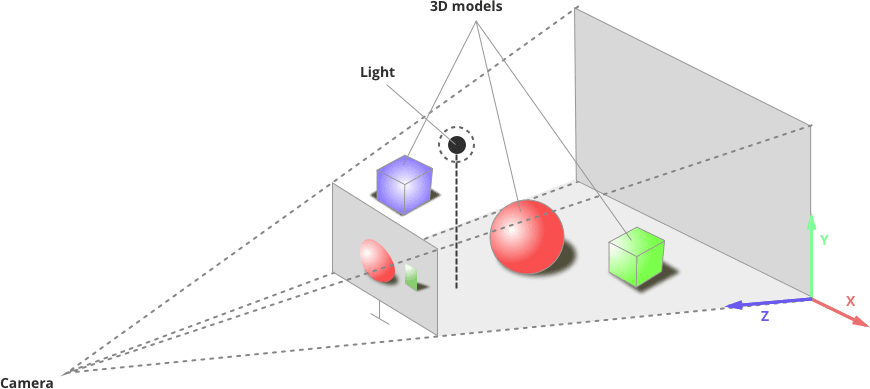

The vocabulary around WebGL has a lot in common with that of CAD software. You create a scene, use lights and a camera, add objects as meshes (geometry + material) and play with textures.

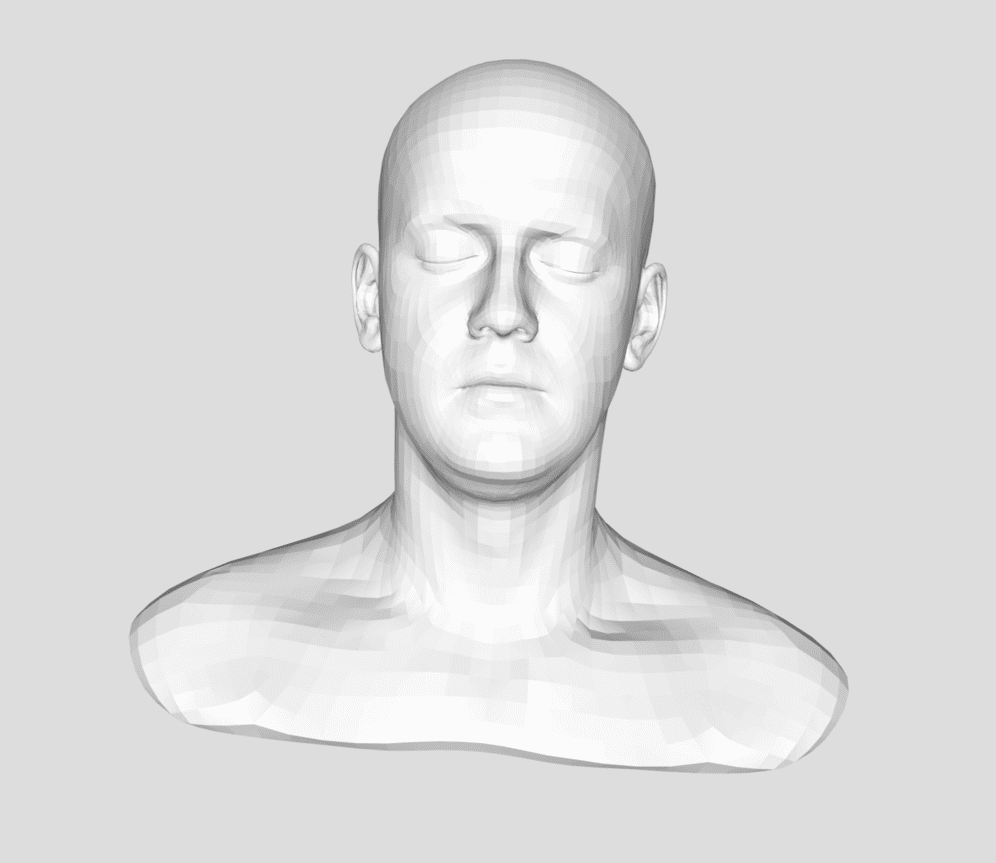

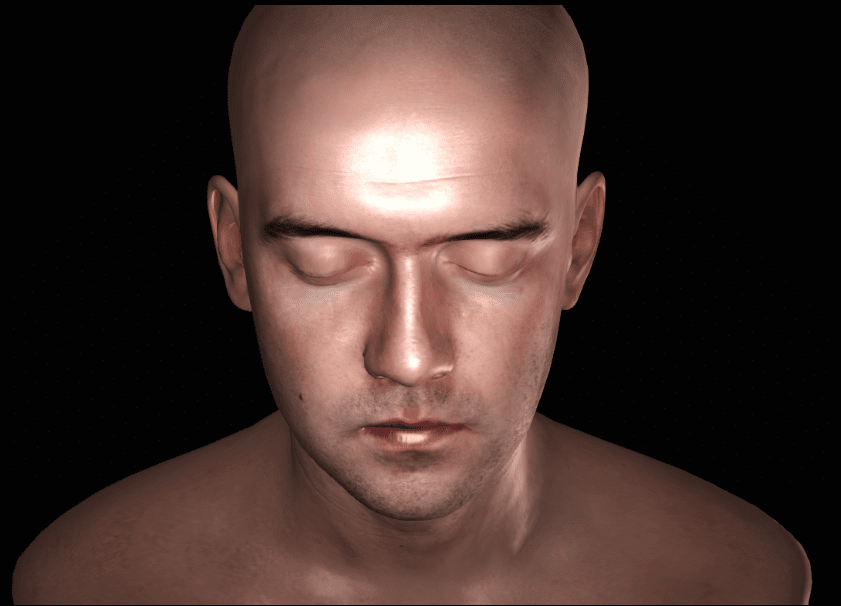

Meshes are made of several sets of coordinates: the position coordinates (x, y, z) along the horizontal, vertical and depth axes, and the texture coordinates (u, v), which tell how an image texture will be applied to the mesh and how light will react.

All those coordinates can be set directly in the code or imported from a file exported by CAD software. Optimizing those coordinates is essential for performance.
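As a small illustration of coordinates set directly in the code, here is a square described with typed arrays. All values and names (`quad`, `describeMesh`) are assumptions made for this sketch; the index list shows one common optimization, where two triangles share vertices instead of duplicating them.

```javascript
// A square made of two triangles.
var quad = {
  // x, y, z for each of the 4 vertices.
  positions: new Float32Array([
    -0.5, -0.5, 0.0, // vertex 0
     0.5, -0.5, 0.0, // vertex 1
     0.5,  0.5, 0.0, // vertex 2
    -0.5,  0.5, 0.0  // vertex 3
  ]),
  // u, v for each vertex: (0, 0) and (1, 1) are opposite corners of the image.
  uvs: new Float32Array([0, 0, 1, 0, 1, 1, 0, 1]),
  // Two triangles sharing vertices 0 and 2, so no position is stored twice.
  indices: new Uint16Array([0, 1, 2, 0, 2, 3])
};

// Hypothetical helper: summarizes how much data a mesh description holds.
function describeMesh(mesh) {
  return {
    vertexCount: mesh.positions.length / 3,
    triangleCount: mesh.indices.length / 3,
    bytes: mesh.positions.byteLength + mesh.uvs.byteLength + mesh.indices.byteLength
  };
}

var quadSummary = describeMesh(quad);
```

Counting vertices and bytes this way is a simple first check before sending a mesh to the GPU, since every extra vertex is extra work for the vertex shader.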

Images are used as textures. They are mapped over the mesh to define the color, the lights, the shadows and reflections.

- Initial mesh

- Result

All of this is rendered in the browser and animated at a given number of frames per second (FPS). WebGL animations are no different from any other Web animation: our canvas changes over time and is redrawn. We’ll use the requestAnimationFrame() method. The frame rate is most of the time 60 frames per second, but it can differ depending on the computer’s capabilities.
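To observe the frame rate a device actually reaches, one option is to compare the timestamps that requestAnimationFrame() passes to its callback. The sketch below is a simple estimate based on the last two frames; the helper name `createFpsMeter` is an assumption made for this illustration.

```javascript
// Hypothetical helper: returns a function that takes the timestamp given by
// requestAnimationFrame (in milliseconds) and returns the frames-per-second
// estimate for the last frame, or null when it cannot be computed yet.
function createFpsMeter() {
  var previous = null;
  return function (now) {
    var fps = null;
    if (previous !== null && now > previous) {
      fps = 1000 / (now - previous);
    }
    previous = now;
    return fps;
  };
}

// Usage (browser):
//   var meter = createFpsMeter();
//   function loop(now) {
//     var fps = meter(now); // close to 60 on most screens
//     requestAnimationFrame(loop);
//   }
//   requestAnimationFrame(loop);
```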

[👷 Tip of the day] It’s possible to animate based on elapsed time and break free from the frame rate rhythm, like so:

```javascript
var previousTime = 0;

requestAnimationFrame(animate);

// Draw the scene.
function animate(now) {
  // Convert the time to seconds.
  now *= 0.001;

  // Subtract the previous time from the current time.
  var deltaTime = now - previousTime;

  // Remember the current time for the next frame.
  previousTime = now;

  // Update the scene using deltaTime here, then schedule the next frame.
  requestAnimationFrame(animate);
}
```

Using a library can be really helpful. It is important to evaluate needs and capabilities before choosing between native code and a library. Indeed, importing a large number of files is sometimes pointless for simple things.

There are other frameworks and micro-frameworks (closer to native) and, if you want, you can also dive deep into native WebGL.

Shader galleries are also available to see examples, inspiration and tricks:

WebGL is not the only way to do 3D. Outside the browser, alternatives exist too, such as Metal for Mac and DirectX for Windows.

The technology comes from OpenGL, which is used in openFrameworks or Processing to go further than a browser.

Game engines are also getting into in-browser 3D: Unity had a Web player, but it now redirects to its WebGL export. Unreal Engine 4 has some browser exports too.

Time to shine! 🌟

To conclude, WebGL is a rising technology in front-end development. Developers are more and more comfortable with it. There is still a lot to learn and standards to settle, but isn’t that always the case? 😉

The design side of a WebGL project needs more documentation and consistency. Indeed, it has inherited the plurality of licensed CAD software. However, it is evolving in a positive way.

WebGL is cool and « wow » for sure, but don’t forget the basics. The more visual effects you add, the more computing power is needed. It would be sad to create a project that only works on latest-generation computers.

On mobile, those capabilities are also reduced, but nothing is impossible (this Winter Rush demo is a good example). The first step is pixel resolution. Augmented reality and WebGL 2.0 are pushing in this direction.
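One possible reading of that first step is to limit how many pixels are actually rendered on very dense mobile screens. The sketch below caps the device pixel ratio before sizing the drawing buffer; the helper name `computeRenderSize` and the cap of 2 used in the usage note are assumptions made for this illustration, not a recommendation from a specific library.

```javascript
// Hypothetical helper: computes the drawing-buffer size for a canvas,
// capping the device pixel ratio so dense screens render fewer pixels.
function computeRenderSize(cssWidth, cssHeight, devicePixelRatio, maxPixelRatio) {
  // Fall back to 1 when the ratio is unknown.
  var ratio = Math.min(devicePixelRatio || 1, maxPixelRatio);
  return {
    ratio: ratio,
    width: Math.floor(cssWidth * ratio),
    height: Math.floor(cssHeight * ratio)
  };
}

// Usage with three.js (browser):
//   var size = computeRenderSize(window.innerWidth, window.innerHeight,
//                                window.devicePixelRatio, 2);
//   renderer.setPixelRatio(size.ratio);
//   renderer.setSize(window.innerWidth, window.innerHeight);
```

On a phone reporting a pixel ratio of 3, capping at 2 means the fragment shader runs on fewer than half as many pixels per frame.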

Performance and fluidity on a Web page are essential, UX is too. WebGL animations are trendy and challenging to implement but we must not fall into the « too-much » extreme. Innovating with new ways of interacting is good and has to be done in a smart and simple way.

Building an immersive, innovative and attractive website thanks to WebGL is possible. Avoid bad practices to keep portability and performance, and the result is guaranteed. Furthermore, adding 3D to the design of apps and websites is a top trend in 2018.

As we saw, WebGL is not just about 3D. Direct interactions with the GPU are undeniably good for performance, animations or even 2D backgrounds.

Next step is practicing and sharing with the community to improve.


